
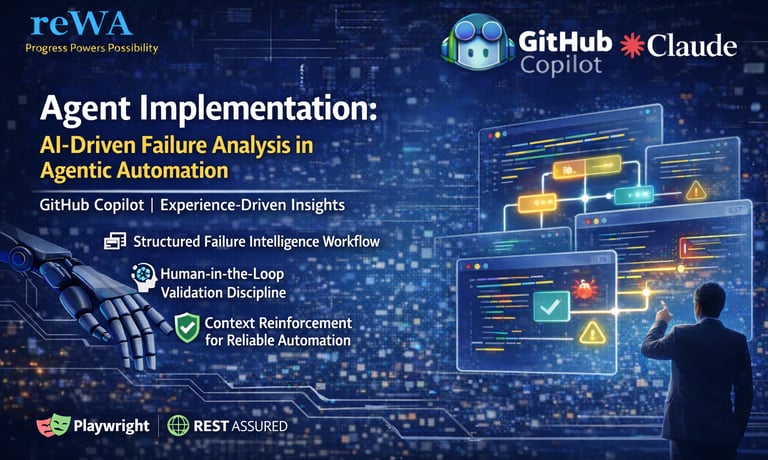

Agent Implementation: AI-Driven Failure Analysis in Agentic Automation

AI-driven failure analysis transforms traditional debugging into structured, context-aware intelligence. This article explores disciplined workflows, human-in-the-loop validation, and documentation reinforcement in open-source automation environments.

TECHNICAL

Kiran Kumar Edupuganti

2/23/2026 | 5 min read


GitHub Copilot | Experience-Driven Insights

Human-in-the-Loop Engineering

Shifting from Traditional Debugging to Intelligent Failure Analysis

The reWireAdaptive portfolio, in association with the @reWireByAutomation channel, presents this article on agent implementation, titled "Agent Implementation: AI-Driven Failure Analysis in Agentic Automation". It explores how agent implementation can be adopted for AI-driven failure analysis.

Introduction

This article explains how failure analysis evolves when automation development shifts into an AI-enabled environment. In traditional automation, debugging is event-driven, log-focused, and dependent on individual analysis skills. In agentic automation, failure handling becomes structured, context-aware, and supported by AI tools integrated into development workflows.

The concepts discussed here apply to any open-source automation framework, such as Playwright, REST Assured, Selenium, Cypress, or WebdriverIO, as well as to custom-built systems. The approach is based on practical implementation experience with AI-enabled development and structured debugging workflows.

The focus of this article is to explain how AI-driven failure analysis improves clarity, reduces repeated effort, and builds stronger automation systems when supported by disciplined implementation practices.

Key focus areas:

Moving from event-driven debugging to structured failure analysis

Using AI tools for log summarization and root cause exploration

Maintaining human-in-the-loop validation

Building reusable failure intelligence

Reinforcing context through documentation

Why This Matters to the Test Automation Forum

In most open-source automation environments, failures are common. UI changes, API updates, environment differences, timing issues, and configuration shifts all contribute to instability. Debugging in such systems often consumes a large portion of development time.

Traditional event-driven debugging begins only after a failure occurs. Engineers review logs manually, inspect stack traces, compare changes, and attempt fixes. While effective, this process is repetitive and depends heavily on individual experience.

AI-enabled development introduces structured support. Failures can be summarized quickly. Patterns can be detected across multiple execution runs. Similar issues can be grouped. Potential root causes can be highlighted early.

This matters because teams using open-source automation need structured methods to improve debugging efficiency while maintaining control and reliability.

Why this is important:

Reduces manual log analysis effort

Improves clarity in understanding failures

Encourages structured debugging discipline

Prevents repeated investigation of similar issues

Supports faster stabilization of automation suites

Implementation Reality in Open-Source Automation

The approach discussed here is not tool-specific. It applies to any open-source automation stack.

In real-world automation projects, failures arise from multiple layers:

Assertion mismatches

Locator instability

API contract changes

Authentication or token issues

Environment configuration drift

Test data inconsistencies

AI-driven analysis helps process large volumes of failure data quickly. It can summarize long logs, correlate execution history, and identify patterns that may not be obvious during manual inspection.

Effective implementation requires structured prompts, clear context, and disciplined workflows.
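As a rough illustration, the failure layers listed above can be given a first-pass label before any AI tool is involved, so that the context passed on is already structured. The sketch below is an assumption, not a prescribed implementation: the rule names and the regular expressions are hypothetical and would need tuning to a project's own frameworks and log formats.

```python
import re

# Hypothetical keyword rules, one per failure layer named in this article.
# The patterns are illustrative only; rules are checked in order, first match wins.
RULES = [
    ("assertion_mismatch", re.compile(r"AssertionError|expected .* but (got|was)", re.I)),
    ("locator_instability", re.compile(r"NoSuchElement|element .*not (found|visible)", re.I)),
    ("api_contract_change", re.compile(r"schema|missing field|unexpected status", re.I)),
    ("auth_or_token", re.compile(r"\b401\b|\b403\b|unauthorized|token (expired|invalid)", re.I)),
    ("environment_drift", re.compile(r"connection refused|ECONNREFUSED|base ?url", re.I)),
    ("test_data", re.compile(r"duplicate key|record not found|test data", re.I)),
]


def classify_failure(message: str) -> str:
    """Return the first matching failure layer, or 'unclassified' if no rule matches."""
    for label, pattern in RULES:
        if pattern.search(message):
            return label
    return "unclassified"


if __name__ == "__main__":
    for line in [
        "AssertionError: expected 200 but got 500",
        "HTTP 401 Unauthorized: token expired",
        "something the rules do not cover",
    ]:
        print(classify_failure(line), "<-", line)
```

A label such as `unclassified` is useful in itself: it marks the failures where human inspection or AI-assisted exploration is most likely to add value.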

Implementation characteristics:

Works across UI, API, and integration layers

Supports failure grouping and trend analysis

Requires structured context input

Improves over time with documentation reinforcement

Needs validation before code changes

Human-in-the-Loop Engineering

Human-in-the-loop engineering ensures that AI-generated insights are reviewed, validated, and applied carefully. AI tools can generate hypotheses and summarize patterns, but interpretation and decision-making remain controlled by the engineer.

Human validation ensures that:

Suggested fixes align with system specifications

Business rules are preserved

Assumptions are verified

Changes do not introduce instability

AI operates based on available context. If context is incomplete or unclear, output may be inaccurate. Human review maintains reliability.

Human-in-the-loop responsibilities:

Reviewing AI-generated root cause analysis

Confirming logical correctness

Approving final code changes

Updating documentation after resolution

Refining prompts for better future output

Human validation strengthens reliability and consistency.

Traditional Event-Driven Debugging vs AI-Driven Failure Analysis

Traditional event-driven debugging begins when a failure occurs. Engineers review stack traces, scan logs line by line, compare recent code changes, and attempt fixes based on experience. Each failure is often treated as a separate investigation, even if similar issues occurred earlier.

In this model, debugging is triggered by events. Historical execution data may not be analyzed systematically. Patterns across failures can remain unnoticed because logs are reviewed independently.

AI-driven failure analysis introduces structure. Instead of scanning logs manually, AI tools summarize key failure points. They identify repeated error patterns across multiple runs. They correlate failures with configuration or code changes. They generate root cause hypotheses based on available context and historical information.

Traditional event-driven debugging characteristics:

Manual log reading

Sequential error tracing

Trial-and-error fixes

Limited cross-run comparison

Knowledge retained at the individual level

Investigation triggered only after failure

AI-driven failure analysis characteristics:

Automated log summarization

Pattern recognition across executions

Root cause hypothesis generation

Context-aware comparison with previous failures

Structured reasoning supported by documentation

Trend-based investigation approach

This shift improves consistency and builds structured failure intelligence over time.
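One simple way to picture "pattern recognition across executions" is to normalize each failure message into a signature and count how many runs share it. The sketch below assumes failures are available as plain message strings per run; the function names `signature` and `recurring_failures` are hypothetical, and an AI tool would typically do something far richer than this rule-based normalization.

```python
import re
from collections import Counter


def signature(message: str) -> str:
    """Normalize a message so run-specific details do not hide a repeated failure."""
    text = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)  # memory addresses, ids
    text = re.sub(r"\d+", "<n>", text)                   # timeouts, ports, counts
    return re.sub(r"\s+", " ", text).strip().lower()


def recurring_failures(runs: list[list[str]], min_runs: int = 2) -> dict[str, int]:
    """Return signatures seen in at least min_runs distinct runs, with their run counts."""
    seen = Counter()
    for messages in runs:
        # A set ensures each signature is counted once per run.
        seen.update({signature(m) for m in messages})
    return {sig: count for sig, count in seen.items() if count >= min_runs}


if __name__ == "__main__":
    history = [
        ["Timeout 3000ms waiting for #cart"],
        ["Timeout 5000ms waiting for #cart", "HTTP 500 from /orders"],
        ["Timeout  3000ms waiting for #cart"],
    ]
    print(recurring_failures(history))
```

Grouping by signature is what turns three separate investigations of the same timeout into one, which is the core difference from event-driven debugging described above.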

Failure Intelligence Workflow

The Failure Intelligence Workflow is a structured method that transforms individual failures into reusable system knowledge. Instead of treating each failure as an isolated event, the workflow captures context, analyzes patterns, validates conclusions, and reinforces documentation for future accuracy.

This structured approach ensures that every failure contributes to improving the automation system.

Step 1: Failure Detection

A test fails during execution in any open-source automation framework.

Step 2: Structured Artifact Collection

Gather all relevant information:

Complete logs

Stack traces

Request and response data (for APIs)

Test inputs and parameters

Environment details

Execution history

Accurate artifact collection ensures complete analysis.
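The artifact list above can be captured in a single record so that nothing is forgotten when a failure is handed to an AI tool. This is a minimal sketch under assumptions: the class name `FailureArtifact`, its field names, and the choice of which fields count as required are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class FailureArtifact:
    """One failure, with the artifacts named in Step 2 (field names are illustrative)."""
    test_name: str
    logs: str
    stack_trace: str
    environment: dict
    inputs: dict = field(default_factory=dict)
    request_response: Optional[dict] = None       # only relevant for API tests
    history: list = field(default_factory=list)   # e.g. ["pass", "pass", "fail"]

    def missing_fields(self) -> list:
        """Names of required artifacts that are empty, so gaps are visible before analysis."""
        required = {
            "logs": self.logs,
            "stack_trace": self.stack_trace,
            "environment": self.environment,
        }
        return [name for name, value in required.items() if not value]

    def to_json(self) -> str:
        """Serialize the record so it can be attached to a prompt or stored with the run."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    artifact = FailureArtifact(
        test_name="test_checkout_total",
        logs="",
        stack_trace="AssertionError: expected 42.00 but got 40.00",
        environment={"browser": "chromium", "env": "staging"},
    )
    print("Missing:", artifact.missing_fields())
    print(artifact.to_json())
```

Checking `missing_fields()` before analysis makes incomplete context visible early, instead of discovering it through an inaccurate AI summary.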

Step 3: AI-Assisted Contextual Analysis

Using AI tools integrated into IDEs:

Summarize the failure clearly

Identify probable root causes

Detect repeated patterns across executions

Highlight configuration mismatches

Compare with previously documented issues

This reduces manual log scanning and improves clarity.
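The tasks in this step map naturally onto a structured prompt. The sketch below only assembles the prompt text; it does not call any AI tool, because the article does not specify one at the API level. The function name `build_analysis_prompt`, its parameters, and the section wording are assumptions for illustration.

```python
def build_analysis_prompt(test_name, log_text, stack_trace, environment,
                          known_issues, max_log_lines=40):
    """Assemble failure context into labeled sections for an AI assistant."""
    # Keep only the tail of the log; the end is usually closest to the failure.
    log_tail = "\n".join(log_text.splitlines()[-max_log_lines:])
    env_lines = "\n".join(f"- {key}: {value}" for key, value in sorted(environment.items()))
    issue_lines = "\n".join(f"- {issue}" for issue in known_issues) or "- none recorded"
    sections = [
        f"Failed test: {test_name}",
        f"Stack trace:\n{stack_trace}",
        f"Log tail (last {max_log_lines} lines at most):\n{log_tail}",
        f"Environment:\n{env_lines}",
        f"Previously documented issues:\n{issue_lines}",
        "Tasks:\n"
        "1. Summarize the failure in two sentences.\n"
        "2. List probable root causes, most likely first.\n"
        "3. Point out any configuration mismatch.\n"
        "4. Say whether this matches a documented issue.\n"
        "State every assumption explicitly.",
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    print(build_analysis_prompt(
        test_name="test_checkout_total",
        log_text="starting browser\nopening /cart\nassertion failed",
        stack_trace="AssertionError: expected 42.00 but got 40.00",
        environment={"browser": "chromium", "env": "staging"},
        known_issues=["Cart total lags behind after coupon removal"],
    ))
```

Asking the tool to state its assumptions gives the engineer something concrete to verify in the validation step that follows.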

Step 4: Human Validation

The engineer evaluates:

Accuracy of AI interpretation

Alignment with specifications

Impact of proposed changes

Risk of unintended side effects

Validation ensures safe and reliable implementation.
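One way to make this validation explicit is a small approval record that blocks a fix until every check has been confirmed by a named reviewer. This is a sketch under assumptions: the check names mirror the four points above, and `ProposedFix` is a hypothetical structure, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass, field

# The four evaluation points from Step 4, as hypothetical check identifiers.
CHECKS = (
    "interpretation_accurate",
    "matches_specification",
    "impact_understood",
    "side_effects_reviewed",
)


@dataclass
class ProposedFix:
    """An AI-suggested fix that stays unapproved until a human confirms every check."""
    summary: str
    reviewer: str = ""
    confirmed: set = field(default_factory=set)

    def confirm(self, check: str, reviewer: str) -> None:
        if check not in CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.reviewer = reviewer
        self.confirmed.add(check)

    def outstanding(self) -> list:
        """Checks that still need human confirmation, in a stable order."""
        return [check for check in CHECKS if check not in self.confirmed]

    @property
    def approved(self) -> bool:
        return bool(self.reviewer) and not self.outstanding()


if __name__ == "__main__":
    fix = ProposedFix(summary="Wait for the cart badge before asserting the item count")
    fix.confirm("interpretation_accurate", reviewer="qa-lead")
    print("Approved:", fix.approved, "| outstanding:", fix.outstanding())
```

The point of the design is that approval is derived from recorded confirmations rather than set directly, so an AI suggestion cannot be marked as accepted without the review having taken place.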

Step 5: Controlled Fix Implementation

Apply fixes in:

Test logic

Synchronization strategy

Assertions

Configuration files

API validation logic

Changes are implemented carefully and verified through reruns.
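Verification through reruns can be as simple as executing the fixed test several times and requiring every attempt to pass. The helper below is a hypothetical sketch: `run_test` stands for whatever callable a project uses to execute a single test and report success, and the default of three attempts is an arbitrary assumption.

```python
from typing import Callable


def verify_by_rerun(run_test: Callable[[], bool], attempts: int = 3) -> dict:
    """Run a test several times; treat the fix as stable only if every attempt passes."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    outcomes = []
    for _ in range(attempts):
        try:
            outcomes.append(bool(run_test()))
        except Exception:
            # An exception during a rerun counts as a failed attempt.
            outcomes.append(False)
    passed = sum(outcomes)
    return {"attempts": attempts, "passed": passed, "stable": passed == attempts}


if __name__ == "__main__":
    flaky = iter([True, False, True])
    print(verify_by_rerun(lambda: next(flaky)))
```

A result that passes only some of the attempts suggests the root cause is timing or environment related, which is worth recording in the documentation step.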

Step 6: Documentation and Context Reinforcement

After resolution:

Update the README or technical documentation

Record the root cause clearly

Document edge cases

Capture failure patterns

Note environment dependencies

This step strengthens future AI sessions and improves agent accuracy.
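To keep documentation updates consistent, each resolved failure can be written in the same shape every time. The sketch below formats one entry and appends it to a documentation file; the entry layout, the function names, and the file name `KNOWN_FAILURES.txt` are assumptions, and a team may prefer its README or another format entirely.

```python
from datetime import date
from pathlib import Path


def format_entry(title, root_cause, fix, edge_cases, environment_notes, resolved_on):
    """Render one resolved failure as a plain-text documentation entry."""
    lines = [
        f"Failure: {title}",
        f"Resolved on: {resolved_on.isoformat()}",
        f"Root cause: {root_cause}",
        f"Fix: {fix}",
        "Edge cases:",
        *[f"  - {case}" for case in edge_cases],
        "Environment dependencies:",
        *[f"  - {note}" for note in environment_notes],
    ]
    return "\n".join(lines) + "\n"


def append_entry(doc_path: Path, entry: str) -> None:
    """Append the entry to the documentation file, creating the file if needed."""
    with doc_path.open("a", encoding="utf-8") as handle:
        handle.write("\n" + entry)


if __name__ == "__main__":
    entry = format_entry(
        title="Cart total assertion fails after coupon removal",
        root_cause="Total is recalculated asynchronously; the test asserted too early",
        fix="Wait for the total element to update before asserting",
        edge_cases=["Multiple coupons applied at once"],
        environment_notes=["Observed on staging only"],
        resolved_on=date(2026, 2, 23),
    )
    append_entry(Path("KNOWN_FAILURES.txt"), entry)
    print(entry)
```

Because every entry carries the same labeled fields, the file can later be pasted into an AI session as context without further cleanup.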

Failures become structured knowledge rather than repeated issues.

Document Analysis and Context Reinforcement

Document analysis plays a critical role in improving AI-assisted debugging accuracy. When documentation is structured and updated regularly, AI tools operate with stronger contextual understanding.

Well-maintained documentation supports:

Clear specification alignment

Reduced ambiguity

Improved AI response quality

Faster onboarding

Reduced repetition of resolved issues

Documentation updates should include:

Root cause explanations

Known edge cases

Environment dependencies

Test data constraints

Common failure patterns

Over time, documentation becomes a context engine that supports intelligent debugging and increases solution reliability. This is my own perception.
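To illustrate the "context engine" idea in its simplest form, a new failure message can be compared against documented failure patterns before anyone starts a fresh investigation. The sketch below uses plain word overlap (Jaccard similarity) as a stand-in; the function names, the threshold of 0.3, and the sample entries are assumptions, and an AI tool reading the documentation would match far more flexibly.

```python
import re


def tokens(text: str) -> set:
    """Lowercase alphabetic words; digits are dropped so timeouts and ids do not matter."""
    return set(re.findall(r"[a-z]+", text.lower()))


def find_similar(message: str, documented: dict, threshold: float = 0.3) -> list:
    """Return (title, score) pairs for documented patterns that overlap with the message."""
    query = tokens(message)
    matches = []
    for title, pattern in documented.items():
        known = tokens(pattern)
        union = query | known
        score = len(query & known) / len(union) if union else 0.0
        if score >= threshold:
            matches.append((title, round(score, 2)))
    # Best match first.
    return sorted(matches, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    documented = {
        "Cart timeout": "timeout waiting for cart selector",
        "Expired token": "unauthorized token expired",
    }
    print(find_similar("Timeout 3000ms waiting for #cart selector", documented))
```

Even this crude lookup shows why documentation quality matters: the match is only as good as the failure patterns that were written down after earlier resolutions.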

Stay tuned for the next article from the reWireAdaptive portfolio.

This is @reWireByAutomation (Kiran Edupuganti), signing off!

With this, @reWireByAutomation has published "Agent Implementation: AI-Driven Failure Analysis in Agentic Automation".